Swimmer¶

This environment is part of the MuJoCo environments; see the MuJoCo environments page for general information about these environments.

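| Action Space | Box(-1, 1, (2,), float32) |
|---|---|
| Observation Space | Box(-Inf, Inf, (8,), float64) |
| import | gymnasium.make("Swimmer-v5") |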

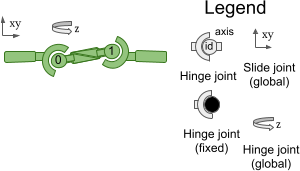

Description¶

This environment corresponds to the Swimmer environment described in Rémi Coulom’s PhD thesis “Reinforcement Learning Using Neural Networks, with Applications to Motor Control”. The environment aims to increase the number of independent state and control variables compared to classical control environments. The swimmers consist of three or more segments (‘links’) and one fewer articulation joints (‘rotors’); each rotor joint connects exactly two links, so the links form a linear chain. The swimmer is suspended in a two-dimensional pool and always starts in the same position (subject to some deviation drawn from a uniform distribution). The goal is to move as fast as possible towards the right by applying torque to the rotors and using fluid friction.

Notes¶

The problem parameters are:

\(n\): number of body parts

\(m_i\): mass of part \(i\) (\(i \in \{1, \dots, n\}\))

\(l_i\): length of part \(i\) (\(i \in \{1, \dots, n\}\))

\(k\): viscous-friction coefficient

The default environment has \(n = 3\), \(l_i = 0.1\), and \(k = 0.1\). It is possible to pass a custom MuJoCo XML file during construction to increase the number of links or to tweak any of the parameters.

Action Space¶

The action space is a Box(-1, 1, (2,), float32). An action represents the torques applied at the rotors, i.e. between adjacent links.

| Num | Action | Control Min | Control Max | Name (in corresponding XML file) | Joint | Type (Unit) |
|---|---|---|---|---|---|---|
| 0 | Torque applied on the first rotor | -1 | 1 | motor1_rot | hinge | torque (N m) |
| 1 | Torque applied on the second rotor | -1 | 1 | motor2_rot | hinge | torque (N m) |
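The bounds in the action table can be made concrete with a small, standard-library-only sketch. The helper `to_swimmer_action` and its constants are hypothetical (not part of Gymnasium); in practice `env.action_space` already encodes these bounds. The sketch clips each commanded torque into [-1, 1] and rejects vectors that do not have exactly one entry per rotor.

```python
# Bounds from the action table: one torque per rotor, each in [-1, 1].
ACTION_LOW = -1.0
ACTION_HIGH = 1.0
ACTION_DIM = 2  # motor1_rot, motor2_rot


def to_swimmer_action(torques):
    """Clip commanded torques into Swimmer's Box(-1, 1, (2,)) action space.

    Hypothetical helper for illustration; not part of Gymnasium.
    """
    if len(torques) != ACTION_DIM:
        raise ValueError(f"expected {ACTION_DIM} torques, got {len(torques)}")
    # Clamp each entry to [ACTION_LOW, ACTION_HIGH].
    return [min(max(float(t), ACTION_LOW), ACTION_HIGH) for t in torques]


print(to_swimmer_action([0.5, -3.0]))  # [0.5, -1.0]
```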

Observation Space¶

The observation space consists of the following parts (in order):

qpos (3 elements by default): Position values of the robot’s body parts.

qvel (5 elements): The velocities of these individual body parts (their derivatives).

By default, the observation does not include the x- and y-coordinates of the front tip.

These can be included by passing exclude_current_positions_from_observation=False during construction.

In this case, the observation space will be a Box(-Inf, Inf, (10,), float64), where the first two observations are the x- and y-coordinates of the front tip.

Regardless of whether exclude_current_positions_from_observation is set to True or False, the x- and y-coordinates are returned in info with the keys "x_position" and "y_position", respectively.

By default, however, the observation space is a Box(-Inf, Inf, (8,), float64) where the elements are as follows:

| Num | Observation | Min | Max | Name (in corresponding XML file) | Joint | Type (Unit) |
|---|---|---|---|---|---|---|
| 0 | angle of the front tip | -Inf | Inf | free_body_rot | hinge | angle (rad) |
| 1 | angle of the first rotor | -Inf | Inf | motor1_rot | hinge | angle (rad) |
| 2 | angle of the second rotor | -Inf | Inf | motor2_rot | hinge | angle (rad) |
| 3 | velocity of the tip along the x-axis | -Inf | Inf | slider1 | slide | velocity (m/s) |
| 4 | velocity of the tip along the y-axis | -Inf | Inf | slider2 | slide | velocity (m/s) |
| 5 | angular velocity of front tip | -Inf | Inf | free_body_rot | hinge | angular velocity (rad/s) |
| 6 | angular velocity of first rotor | -Inf | Inf | motor1_rot | hinge | angular velocity (rad/s) |
| 7 | angular velocity of second rotor | -Inf | Inf | motor2_rot | hinge | angular velocity (rad/s) |
| excluded | position of the tip along the x-axis | -Inf | Inf | slider1 | slide | position (m) |
| excluded | position of the tip along the y-axis | -Inf | Inf | slider2 | slide | position (m) |
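The index table can be checked with a short sketch that assembles the flat observation from qpos and qvel. It assumes, consistently with the table, that both vectors are ordered by joint as slider1, slider2, free_body_rot, motor1_rot, motor2_rot; `build_observation` and `observation_labels` are hypothetical helpers, not Gymnasium functions.

```python
# Assumed joint order of qpos and qvel (inferred from the observation table).
JOINTS = ("slider1", "slider2", "free_body_rot", "motor1_rot", "motor2_rot")


def build_observation(qpos, qvel, exclude_current_positions_from_observation=True):
    """Return the flat observation: qpos (minus x/y if excluded) followed by qvel."""
    assert len(qpos) == len(JOINTS) and len(qvel) == len(JOINTS)
    # Dropping slider1/slider2 removes the tip's x- and y-coordinates.
    if exclude_current_positions_from_observation:
        position = list(qpos[2:])
    else:
        position = list(qpos)
    return position + list(qvel)


def observation_labels(exclude_current_positions_from_observation=True):
    """Label each observation index with the qpos/qvel joint it comes from."""
    pos_joints = JOINTS[2:] if exclude_current_positions_from_observation else JOINTS
    return [f"qpos[{j}]" for j in pos_joints] + [f"qvel[{j}]" for j in JOINTS]


qpos = [0.3, -0.1, 0.05, 0.2, -0.2]  # x, y, tip angle, rotor angles
qvel = [1.0, 0.0, 0.01, 0.5, -0.5]
obs = build_observation(qpos, qvel)
print(len(obs), obs[:3])  # 8 [0.05, 0.2, -0.2]
print(len(build_observation(qpos, qvel, False)))  # 10
```

With the default setting the observation has 8 entries and index 3 is the tip's x-velocity; with `exclude_current_positions_from_observation=False` the tip's x and y come first, giving 10 entries.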

Rewards¶

The total reward is: reward = forward_reward - ctrl_cost.

forward_reward: A reward for moving forward; this reward is positive if the Swimmer moves forward (in the positive \(x\) direction, i.e. to the right). It equals \(w_{forward} \times \frac{dx}{dt}\), where \(dx\) is the displacement of the (front) “tip” (\(x_{after-action} - x_{before-action}\)), \(dt\) is the time between actions, which depends on the frame_skip parameter (default is 4) and frametime, which is \(0.01\), so the default is \(dt = 4 \times 0.01 = 0.04\), and \(w_{forward}\) is the forward_reward_weight (default is \(1\)).

ctrl_cost: A cost, subtracted from the reward, that penalizes the Swimmer for taking actions that are too large. It equals \(w_{control} \times \|action\|_2^2\), where \(w_{control}\) is the ctrl_cost_weight (default is \(10^{-4}\)).

info contains the individual reward terms.
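As a worked example of the reward formula, the sketch below computes \(dt\), forward_reward, and ctrl_cost from the tip's x-position before and after one step. The default arguments are the values stated in this section; `swimmer_reward` is a hypothetical helper for illustration, not the environment's own code.

```python
def swimmer_reward(x_before, x_after, action,
                   frame_skip=4, frametime=0.01,
                   forward_reward_weight=1.0, ctrl_cost_weight=1e-4):
    """Return (reward, forward_reward, ctrl_cost) for one step.

    Hypothetical helper following the formulas in this section.
    """
    dt = frame_skip * frametime                 # 4 * 0.01 = 0.04 by default
    x_velocity = (x_after - x_before) / dt      # dx/dt of the front tip
    forward_reward = forward_reward_weight * x_velocity
    ctrl_cost = ctrl_cost_weight * sum(a * a for a in action)  # w * ||action||_2^2
    reward = forward_reward - ctrl_cost
    return reward, forward_reward, ctrl_cost


reward, fwd, ctrl = swimmer_reward(x_before=0.0, x_after=0.004, action=[1.0, -1.0])
print(round(reward, 6))  # 0.0998
```

Here the tip moves 0.004 m in 0.04 s, so forward_reward is about 0.1, while even a saturated action [1, -1] costs only \(2 \times 10^{-4}\); at the default weights, forward progress dominates the reward.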

Starting State¶

The initial position state is \(\mathcal{U}_{[-reset\_noise\_scale \times I_{5}, reset\_noise\_scale \times I_{5}]}\). The initial velocity state is \(\mathcal{U}_{[-reset\_noise\_scale \times I_{5}, reset\_noise\_scale \times I_{5}]}\).

where \(\mathcal{U}\) is the multivariate uniform continuous distribution.
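The starting-state distribution can be sketched with the standard library's `random` module. The sketch assumes, as the formula above states, that the noise-free initial state is zero, so each of the 5 qpos and 5 qvel entries is drawn independently from \(\mathcal{U}_{[-reset\_noise\_scale, reset\_noise\_scale]}\). `sample_initial_state` is a hypothetical helper, and 0.1 is used only as an example scale.

```python
import random


def sample_initial_state(reset_noise_scale, nq=5, nv=5, rng=None):
    """Draw qpos and qvel entries independently from U[-scale, scale].

    Hypothetical helper; assumes the noise-free initial state is all zeros.
    """
    rng = rng or random.Random()
    low, high = -reset_noise_scale, reset_noise_scale
    qpos = [rng.uniform(low, high) for _ in range(nq)]  # 5 position entries
    qvel = [rng.uniform(low, high) for _ in range(nv)]  # 5 velocity entries
    return qpos, qvel


# Seeded generator so the same initial state is reproduced on every run.
qpos, qvel = sample_initial_state(0.1, rng=random.Random(0))
```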

Episode End¶

Termination¶

The Swimmer never terminates.

Truncation¶

The default duration of an episode is 1000 timesteps.
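Because the Swimmer never terminates, an episode can only end by truncation. The minimal sketch below shows the resulting flags, assuming a step counter and a limit of 1000 steps; `episode_end_flags` is a hypothetical helper. In Gymnasium this limit is typically applied by the `TimeLimit` wrapper that `gymnasium.make` adds, rather than by the environment itself.

```python
def episode_end_flags(step_count, max_episode_steps=1000):
    """Return (terminated, truncated) for Swimmer after `step_count` steps."""
    terminated = False                           # Swimmer never terminates
    truncated = step_count >= max_episode_steps  # time limit reached
    return terminated, truncated


steps = 0
while True:
    steps += 1
    terminated, truncated = episode_end_flags(steps)
    if terminated or truncated:
        break
print(steps)  # 1000
```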

Arguments¶

Swimmer provides a range of parameters to modify the observation space, reward function, and initial state.

These parameters can be applied during gymnasium.make in the following way:

```python
import gymnasium as gym

env = gym.make('Swimmer-v5', xml_file=...)
```

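| Parameter | Type | Default | Description |
|---|---|---|---|
| xml_file | str | "swimmer.xml" | Path to a MuJoCo model |
| forward_reward_weight | float | 1 | Weight for forward_reward term (see Rewards section) |
| ctrl_cost_weight | float | 1e-4 | Weight for ctrl_cost term (see Rewards section) |
| reset_noise_scale | float | 0.1 | Scale of random perturbations of initial position and velocity (see Starting State section) |
| exclude_current_positions_from_observation | bool | True | Whether or not to omit the x- and y-coordinates from observations. Excluding the position can serve as an inductive bias to induce position-agnostic behavior in policies (see Observation Space section) |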

Version History¶

v5:

- Minimum mujoco version is now 2.3.3.
- Added support for fully custom/third-party mujoco models using the xml_file argument (previously only a few changes could be made to the existing models).
- Added default_camera_config argument, a dictionary for setting the mj_camera properties, mainly useful for custom environments.
- Added env.observation_structure, a dictionary for specifying the observation space composition (e.g. qpos, qvel), useful for building tooling and wrappers for the MuJoCo environments.
- Return a non-empty info with reset(); previously an empty dictionary was returned. The new keys are the same state information as step().
- Added frame_skip argument, used to configure the dt (duration of step()); the default varies by environment, so check the environment documentation pages.
- Restored the xml_file argument (was removed in v4).
- Added forward_reward_weight and ctrl_cost_weight to configure the reward function (defaults are effectively the same as in v4).
- Added reset_noise_scale argument to set the range of initial states.
- Added exclude_current_positions_from_observation argument.
- Replaced info["reward_fwd"] and info["forward_reward"] with info["reward_forward"] to be consistent with the other environments.

v4: All MuJoCo environments now use the MuJoCo bindings in mujoco >= 2.1.3.

v3: Support for gymnasium.make kwargs such as xml_file, ctrl_cost_weight, reset_noise_scale, etc. RGB rendering comes from a tracking camera (so the agent does not run away from the screen). Moved to the gymnasium-robotics repo.

v2: All continuous control environments now use mujoco-py >= 1.50. Moved to the gymnasium-robotics repo.

v1: max_time_steps raised to 1000 for robot based tasks. Added reward_threshold to environments.

v0: Initial release.